Artificial intelligence (AI) and machine learning are reshaping numerous facets of modern life, spanning entertainment, commerce, and significantly, healthcare. From personalized recommendations on streaming platforms to tailored shopping experiences, AI’s ability to analyze vast datasets and derive meaningful insights is transforming industries. In healthcare, the potential of AI is particularly profound, offering promising advancements from diagnostics to treatment strategies. There’s a growing consensus that AI tools will serve to augment and enhance the capabilities of healthcare professionals, rather than replace them. AI is poised to support healthcare personnel across a spectrum of tasks, ranging from streamlining administrative processes and clinical documentation to enhancing patient engagement and providing specialized assistance in areas like image analysis, medical device automation, and continuous patient monitoring. This article explores the significant applications of AI within healthcare, encompassing direct patient care and crucial aspects of the healthcare value chain, such as pharmaceutical development and ambient assisted living technologies.

Keywords: Artificial intelligence, healthcare applications, machine learning, precision medicine, ambient assisted living, natural language processing, machine vision

The Dawn of a New Healthcare Era

The pervasive influence of big data and machine learning is undeniable, impacting sectors from entertainment and retail to the critical field of healthcare. Just as streaming services predict viewing preferences and online retailers anticipate purchasing habits, search engines analyze symptom queries, revealing potential healthcare trends. This extensive data analysis makes detailed personal profiling possible, which is valuable both for understanding behavior and for targeting interventions, and it has significant implications for predicting and managing healthcare trends. The optimism surrounding artificial intelligence (AI) in healthcare stems from its potential to revolutionize diagnostics and treatment across the board. Compelling evidence already indicates that AI algorithms can perform at levels comparable to or exceeding human capabilities in tasks such as analyzing medical images and correlating patient symptoms with disease prognosis using electronic medical records (EMRs) [1].

The escalating demand for healthcare services, coupled with a growing shortage of healthcare professionals, particularly physicians, presents significant challenges globally. Healthcare institutions are also under pressure to integrate rapidly evolving technologies and meet rising patient expectations for service quality and outcomes, mirroring experiences in consumer-centric industries. Advances in wireless technology and smartphone capabilities have paved the way for on-demand healthcare services via health tracking applications and digital platforms. This has fostered a new paradigm in healthcare delivery through remote interactions, accessible anytime and anywhere. Such services are particularly beneficial in underserved regions lacking specialist access and can reduce costs and minimize exposure to contagious illnesses in clinical settings. Telehealth solutions are also crucial for developing countries expanding their healthcare infrastructure to meet contemporary needs [3]. However, rigorous independent validation is essential to confirm the safety and efficacy of these innovative approaches.

The healthcare ecosystem is increasingly recognizing the pivotal role of AI-driven tools in shaping the next generation of healthcare technology. AI is believed to offer improvements across all facets of healthcare operations and service delivery. The potential for cost savings through AI implementation is a major driver for its adoption in healthcare. Projections estimate that AI applications could reduce annual US healthcare costs by USD 150 billion by 2026. A substantial portion of these savings is expected to arise from shifting the healthcare model from a reactive, disease-treatment focus to a proactive, health-management approach. This transition is anticipated to lead to fewer hospitalizations, doctor visits, and treatments. AI-based technologies are poised to play a crucial role in promoting preventative health through continuous monitoring and personalized coaching, facilitating earlier diagnoses, tailored treatments, and more efficient follow-up care.

The healthcare AI market is experiencing rapid expansion, projected to reach USD 6.6 billion by 2021, with a compound annual growth rate of 40% [4].

Technological Leaps in AI

The past decade has witnessed remarkable technological advancements in AI and data science. While AI research across various sectors has been ongoing for decades, the current surge in AI adoption is distinct. The convergence of enhanced computer processing power, expansive data repositories, and a growing pool of AI talent has spurred the rapid development of AI tools and technologies, including within healthcare [5]. This confluence is set to trigger a paradigm shift in AI technology adoption and its societal impact.

The emergence of deep learning (DL) has particularly transformed the perception of AI tools and is a key factor driving the current enthusiasm for AI applications. DL enables the identification of complex correlations that earlier machine learning algorithms could not capture. DL is largely based on artificial neural networks; unlike earlier networks with only a few layers, DL networks can have ten or more layers and simulate millions of artificial neurons.

AI systems from leading technology companies, such as IBM’s Watson and Google’s DeepMind, are at the forefront of this innovation. Such systems have demonstrated AI’s capacity to surpass human performance in specific tasks, including strategic games like chess and Go. Both IBM Watson and Google’s DeepMind are being explored for numerous healthcare applications. IBM Watson is being utilized for diabetes management, advanced cancer care and modeling, and drug discovery, though definitive clinical benefits for patients are still under evaluation. DeepMind is also being investigated for applications including mobile medical assistants, medical image-based diagnostics, and predicting patient deterioration [6], [7].

Many technologies reliant on data and computation have followed exponential growth patterns, exemplified by Moore’s law, which describes the exponential increase in computer chip performance. Consumer applications offering affordable services have also experienced similar exponential growth. In healthcare and life sciences, the mapping of the human genome and the digitization of medical data hold the potential for comparable growth. As genetic sequencing and profiling become more cost-effective, and electronic health records become a standard platform for data collection, exponential growth is anticipated. While initial impacts may appear modest, exponential growth will eventually become transformative. Humans often struggle to grasp exponential trends, tending to overestimate short-term technological impacts (e.g., within a year) while underestimating long-term effects (e.g., over a decade).
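The gap between short-term and long-term intuition can be made concrete with a little arithmetic. The Python sketch below compares an exponentially growing quantity with a straight-line extrapolation of its first-year gain. The two-year doubling period is an assumption chosen for illustration (it is the commonly quoted form of Moore’s law), and the function names are hypothetical.

```python
def exponential(initial: float, doubling_years: float, t: float) -> float:
    """Value after t years when the quantity doubles every `doubling_years`."""
    return initial * 2.0 ** (t / doubling_years)


def linear_extrapolation(initial: float, doubling_years: float, t: float) -> float:
    """Straight-line forecast that repeats the first-year gain every year."""
    first_year_gain = exponential(initial, doubling_years, 1.0) - initial
    return initial + first_year_gain * t


if __name__ == "__main__":
    # Assumption: doubling every 2 years, starting from a value of 1.0.
    for year in (1, 5, 10):
        actual = exponential(1.0, 2.0, year)
        guess = linear_extrapolation(1.0, 2.0, year)
        print(f"year {year:2d}: exponential={actual:6.2f}  linear guess={guess:6.2f}")
```

Under these assumptions the two curves agree after one year but diverge widely after ten, which is the pattern the paragraph above describes: a linear mental model badly underestimates the long-term effect.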

Diverse Applications of AI in Healthcare

It’s widely accepted that AI tools are designed to augment and enhance human capabilities, not to replace healthcare professionals. AI is poised to assist healthcare personnel with diverse tasks, from administrative workflows and clinical documentation to patient outreach and specialized support in image analysis, medical device automation, and patient monitoring.

Opinions vary regarding the most impactful applications of AI in healthcare. Forbes highlighted administrative workflows, image analysis, robotic surgery, virtual assistants, and clinical decision support as key areas in 2018 [8]. An Accenture report from 2018 echoed these areas, adding connected machines, dosage error reduction, and cybersecurity [9]. McKinsey’s 2019 report emphasized connected and cognitive devices, targeted and personalized medicine, robotics-assisted surgery, and electroceuticals as significant applications [10].

The following sections will delve into major AI applications in healthcare, covering both direct patient care and supporting areas within the healthcare value chain, such as drug development and ambient assisted living (AAL).

Precision Medicine: Tailoring Treatment with AI

Precision medicine offers the promise of customizing healthcare interventions for individuals or patient groups based on their specific disease profile, diagnostic and prognostic information, and treatment responses. This personalized approach considers genomic variations alongside factors like age, gender, geography, race, family history, immune profile, metabolic profile, microbiome, and environmental exposures. The core aim of precision medicine is to base every stage of patient care on individual biology rather than on population averages. This involves collecting individual data, such as genetic information, physiological monitoring data, or EMR data, and tailoring treatment using advanced AI models. Precision medicine offers several advantages, including reduced healthcare costs, fewer adverse drug reactions, and enhanced drug efficacy [11]. Innovations in precision medicine are expected to significantly benefit patients and fundamentally change healthcare delivery and evaluation.

Precision medicine initiatives are broadly categorized into three clinical areas: complex algorithms, digital health applications, and “omics”-based tests.

Complex algorithms: Machine learning algorithms analyze large datasets encompassing genetic information, demographic data, or electronic health records to predict prognosis and determine optimal treatment strategies.

Digital health applications: Healthcare apps collect and process patient-generated data, including dietary intake, emotional states, activity levels, and health monitoring data from wearables and mobile sensors. Within precision medicine, these apps utilize machine learning algorithms to identify data trends, improve predictions, and provide personalized treatment recommendations.

Omics-based tests: Genetic information from population cohorts is analyzed with machine learning algorithms to identify correlations and predict individual patient treatment responses. Beyond genetics, biomarkers such as protein expression, gut microbiome profiles, and metabolic profiles are also integrated with machine learning to facilitate personalized treatments [12].

The following sections explore specific therapeutic applications of AI, including genetics-based solutions and drug discovery.

Genetics-Based Solutions: Unlocking the Genome with AI

It’s anticipated that within the next decade, genome sequencing will become broadly accessible, potentially offered at birth or in adulthood. This comprehensive genome sequencing, generating 100–150 GB of data, presents a powerful tool for precision medicine. However, effectively integrating genomic and phenotypic information remains an ongoing challenge. Current clinical systems require redesign to fully leverage the potential of genomics data and realize its benefits [13].

Deep Genomics, a health technology company, is pioneering the use of AI to identify patterns within vast genetic datasets and EMRs, establishing links between these domains concerning disease markers. The company leverages these correlations to pinpoint therapeutic targets, both existing and novel, for developing individualized genetic medicines. AI is integral to every stage of their drug discovery and development process, including target identification, lead optimization, toxicity assessment, and innovative trial design.

Many inherited diseases manifest symptoms without a definitive diagnosis, and interpreting whole genome data remains complex due to the sheer volume of genetic profiles. Precision medicine, empowered by AI, offers methods to enhance the identification of genetic mutations through comprehensive genome sequencing.

AI in Drug Discovery and Development: Accelerating Innovation

Drug discovery and development is a protracted, expensive, and intricate process, often spanning over a decade from identifying molecular targets to market approval. Failures at any stage incur substantial financial losses, and many drug candidates fail during development and never reach the market. Adding to these challenges are increasing regulatory hurdles and the difficulty of consistently discovering drug molecules significantly superior to existing treatments. Together, these factors make drug innovation challenging and inefficient, and they drive up the cost of the new drugs that do reach the market [14].

Recent years have seen an exponential increase in data related to drug compound activity and biomedical information, driven by automation and novel experimental techniques. However, efficiently classifying potential drug compounds requires mining this extensive chemical data, and machine learning techniques have shown great promise [15]. Methods like support vector machines, neural networks, and random forests have been utilized since the 1990s to develop models aiding drug discovery. More recently, deep learning (DL) has gained prominence due to the increased data volume and advancements in computing power. Machine learning can streamline various tasks within the drug discovery process, including predicting drug compound properties and activity, de novo design of drug compounds, understanding drug-receptor interactions, and predicting drug reactions [16].

Drug molecules and their associated features are converted into vector formats for machine learning systems. Data used typically includes molecular descriptors (e.g., physicochemical properties), molecular fingerprints (molecular structure), simplified molecular input line entry system (SMILES) strings, and grids for convolutional neural networks (CNNs) [17].
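As a rough illustration of what “converting a molecule into a vector” means, the sketch below hashes short character fragments of a SMILES string into a fixed-length bit vector and compares two vectors with the Tanimoto coefficient. This is a deliberately simplified stand-in: substrings of a SMILES string are not chemically meaningful substructures, and real pipelines derive fingerprints from the molecular graph with a cheminformatics toolkit. The function names, the 64-bit length, and the fragment size are all assumptions made for this example.

```python
import hashlib
from typing import List


def hashed_fingerprint(smiles: str, n_bits: int = 64, max_ngram: int = 3) -> List[int]:
    """Toy fingerprint: set one bit per hashed character n-gram of the SMILES text."""
    bits = [0] * n_bits
    for n in range(1, max_ngram + 1):
        for i in range(len(smiles) - n + 1):
            fragment = smiles[i:i + n]
            # sha256 gives a hash that is stable across runs, unlike built-in hash().
            digest = hashlib.sha256(fragment.encode("utf-8")).digest()
            bits[int.from_bytes(digest[:4], "big") % n_bits] = 1
    return bits


def tanimoto(a: List[int], b: List[int]) -> float:
    """Shared set bits divided by bits set in either vector."""
    both = sum(x & y for x, y in zip(a, b))
    either = sum(x | y for x, y in zip(a, b))
    return both / either if either else 0.0


if __name__ == "__main__":
    ethanol = hashed_fingerprint("CCO")
    acetic_acid = hashed_fingerprint("CC(=O)O")
    benzene = hashed_fingerprint("c1ccccc1")
    print("ethanol vs acetic acid:", round(tanimoto(ethanol, acetic_acid), 2))
    print("ethanol vs benzene:    ", round(tanimoto(ethanol, benzene), 2))
```

Whatever the encoding, the result is the same kind of object: a fixed-length numeric vector that a downstream model can consume.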

Predicting Drug Properties and Activity with AI

Understanding drug molecule properties and activity is crucial for assessing their behavior within the human body. Machine learning techniques are employed to evaluate the biological activity; the absorption, distribution, metabolism, and excretion (ADME) characteristics; and the physicochemical properties of drug molecules (Fig. 2.1). Chemical and biological data libraries like ChEMBL and PubChem, containing information on millions of molecules targeting various diseases, have become available. These machine-readable libraries are used to construct machine learning models for drug discovery. For example, CNNs have been used to generate molecular fingerprints from large sets of molecular graphs, capturing information about each atom in a molecule. These neural fingerprints are then used to predict new characteristics of a given molecule. As demonstrated by Coley et al., this approach enables the evaluation of molecular properties such as octanol solubility, melting point, and biological activity, allowing features of new drug molecules to be predicted [18]. These predictions can be combined with scoring functions to select molecules with desirable biological activity and physicochemical properties. This matters because many newly discovered drugs currently have complex structures and/or undesirable properties, such as poor solubility, low stability, or poor absorption.

Figure 2.1.

Machine learning opportunities within the small molecule drug discovery and development process.

Machine learning is also utilized for toxicity assessment. DeepTox, a DL-based model, evaluates the toxic effects of compounds using a dataset containing numerous drug molecules [19]. MoleculeNet, another platform, translates two-dimensional molecular structures into novel features/descriptors for predicting toxicity. Built on data from public databases, MoleculeNet has tested over 700,000 compounds for toxicity and other properties [20].

De Novo Drug Design with Deep Learning

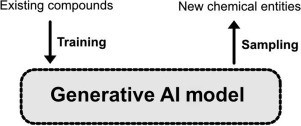

An exciting application of DL in drug discovery is the generation of novel chemical structures through neural networks (Fig. 2.2). Several DL-based techniques have been proposed for de novo molecular design, including protein engineering for designing proteins with specific binding properties or functions.

Figure 2.2.

Illustration of the generative artificial intelligence concept for de novo design. Training data of molecular structures are used to emit new chemical entities by sampling.

Variational autoencoders and adversarial autoencoders are frequently used to automate the design of new molecules by fitting design models to large drug molecule datasets. Autoencoders are a type of neural network for unsupervised learning; the same family of models is used to generate realistic images, such as fictional human faces. Trained on extensive collections of drug molecule structures, autoencoders use their latent variables as a generative model. For instance, druGAN, a program using adversarial autoencoders, generates new molecular fingerprints and drug designs incorporating properties like solubility and absorption based on predefined anticancer drug characteristics. The druGAN results indicate significant improvements in the efficiency of generating new drug designs with specific properties [21]. Blaschke et al. applied adversarial autoencoders and Bayesian optimization to generate ligands specific to the dopamine type 2 receptor [22]. Merk et al. trained a recurrent neural network on a large number of bioactive compounds represented as SMILES strings. This model was then fine-tuned to generate retinoid X and peroxisome proliferator-activated receptor agonists. The synthesized compounds demonstrated potent receptor modulatory activity in in vitro assays [23].

Drug-Target Interaction Prediction

Assessing drug-target interactions is a critical step in drug design. Binding pose and affinity between a drug molecule and its target significantly influence in silico prediction success. Molecular docking, a common approach, identifies drug candidates and predicts interesting drug-target interactions.

Molecular docking, a molecular modeling technique, studies binding and complex formation between molecules. It can identify interactions between a drug compound and a target, such as a receptor, and predict the drug compound’s conformation within the target’s binding site. Docking algorithms rank interactions using scoring functions and estimate binding affinity. Popular molecular docking tools include AutoDock, DOCK, Glide, and FlexX. While these tools are effective, many data scientists are working to improve drug-target interaction prediction using more advanced learning models [24]. CNNs are valuable as scoring functions for docking applications, demonstrating efficient pose/affinity prediction for drug-target complexes and activity/inactivity assessment. Wallach and Dzamba developed AtomNet, a deep CNN, to predict the bioactivity of small molecule drugs for drug discovery. AtomNet outperformed conventional docking models in accuracy, achieving an AUC (area under the curve) of 0.9 or higher for 58% of targets [25].
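Because results such as AtomNet’s are reported as AUC values, it helps to see how that number is obtained. The sketch below computes the area under the ROC curve using its pairwise interpretation: the probability that a randomly chosen active compound receives a higher score than a randomly chosen inactive one, with ties counted as one half. The function name and the example labels and scores are illustrative and are not taken from any cited study.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """AUC as the fraction of (active, inactive) pairs ranked correctly."""
    actives = [s for y, s in zip(labels, scores) if y == 1]
    inactives = [s for y, s in zip(labels, scores) if y == 0]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive compound")
    wins = 0.0
    for a in actives:
        for i in inactives:
            if a > i:
                wins += 1.0      # active correctly ranked above inactive
            elif a == i:
                wins += 0.5      # ties count as half
    return wins / (len(actives) * len(inactives))


if __name__ == "__main__":
    # Made-up predictions for six compounds (1 = active, 0 = inactive).
    labels = [1, 1, 0, 1, 0, 0]
    scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]
    print(f"AUC = {roc_auc(labels, scores):.3f}")
```

An AUC of 0.5 corresponds to random ranking and 1.0 to perfect separation, so a threshold of 0.9 describes a scoring function that ranks actives above inactives in at least nine out of ten pairs.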

Current trends in AI applications for drug discovery and development increasingly favor DL approaches. Compared to conventional machine learning, DL models have many more parameters and need larger datasets, which lengthens training and is a disadvantage when data is limited. Ongoing research therefore focuses on reducing the amount of data needed for DL training, so that models can learn from small datasets much as humans learn from a few examples. This is particularly valuable where data collection is resource-intensive, as in medicinal chemistry and for novel drug targets. Novel methods like one-shot learning, long short-term memory approaches, and memory-augmented neural networks like the differentiable neural computer are being explored [17].

AI and Medical Visualization: Enhancing Diagnostic Accuracy

Interpreting image and video data presents a significant challenge. Experts undergo extensive training to discern medical phenomena, requiring continuous learning to keep pace with new research and information. The increasing demand for expert analysis, coupled with a shortage of specialists, necessitates innovative solutions. AI offers a promising tool to bridge this gap.

Machine Vision for Enhanced Diagnosis and Surgery

Computer vision, involving machine interpretation of images and videos at or beyond human capabilities, is making significant strides in object and scene recognition. Key applications include image-based diagnosis and image-guided surgery.

Computer Vision in Diagnostic and Surgical Procedures

Historically rooted in statistical signal processing, computer vision is increasingly adopting artificial neural networks, particularly deep learning (DL), as its primary learning method. DL is used to develop computer vision algorithms for classifying images of lesions in skin and other tissues. Video data, estimated to contain 25 times the data volume of high-resolution diagnostic images like CT scans, offers rich temporal resolution and potentially greater data value. While video analysis is still developing, it holds significant promise for clinical decision support. For instance, real-time video analysis of laparoscopic procedures has achieved 92.8% accuracy in identifying procedural steps and detecting missing or unexpected steps [26].

A notable surgical application of AI and computer vision is augmenting surgical skills like suturing and knot-tying. The smart tissue autonomous robot (STAR) from Johns Hopkins University has demonstrated superior performance to human surgeons in certain procedures, such as bowel anastomosis in animals. While fully autonomous robotic surgeons remain a future concept, AI-augmented surgery is attracting significant research interest. A group at the Institute of Information Technology at the Alpen-Adria Universität Klagenfurt is using surgical videos as training data to identify specific surgical interventions. Their algorithms can recognize actions like dissection or cutting on patient tissues with high likelihood, along with the specific body region [27]. These algorithms, trained on extensive video datasets, can be invaluable in complex surgeries or in emergency situations where less experienced surgeons must operate. Active surgeon involvement in developing these tools is crucial to ensure clinical relevance, quality, and seamless translation from lab to clinical practice.

Deep Learning for Medical Image Recognition

The term “deep” in deep learning refers to the multi-layered structure of these machine learning models. Among DL techniques, convolutional neural networks (CNNs) are particularly promising for image recognition. Yann LeCun, a pioneering computer scientist, laid the groundwork in the 1980s with LeNet, an automated handwriting recognition algorithm for financial systems. Since then, CNNs have shown remarkable potential in pattern recognition.

Mirroring radiologists’ training process of correlating image interpretations with ground truth, CNNs are inspired by the human visual cortex, where image recognition starts with identifying image features. CNNs require substantial training data in the form of labeled medical images. During training, CNNs adjust weights and filters (image region characteristics) at each hidden layer to improve performance.

Simply put (Fig. 2.3), convolving an image with a set of weights produces a stack of filtered images, which together form a convolutional layer. Pooling then shrinks the image representation, and a rectified linear unit (ReLU) removes negative values. These operations are stacked to create “deep” layers, so the process repeats, progressively filtering the image and reducing its size. The final “fully connected” layer integrates the resulting values to generate an output. If the system’s answer is incorrect, the error is propagated backward through the network (backpropagation) and gradient descent adjusts the weights, enabling “learning from mistakes.” Once trained, the system can classify new images (inference), much as a radiologist would [28].

Figure 2.3.

The various stages of convolutional neural networks at work.

Adapted from Lundervold AS, Lundervold A. An overview of deep learning in medical imaging focusing on MRI. Z Med Phys. 2019;29:102–27.
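The convolution, ReLU, and pooling steps described above can be traced by hand on a tiny example. The following sketch is a plain-Python forward pass through one such stage, with no learning involved. The 5×5 “image” and the hand-picked 2×2 edge-detecting kernel are assumptions for illustration; in a trained CNN the kernel weights are learned through backpropagation rather than chosen manually. ReLU is applied before max pooling here, which gives the same result as the reverse order because taking a maximum and clipping at zero commute.

```python
from typing import List

Matrix = List[List[float]]


def convolve2d(image: Matrix, kernel: Matrix) -> Matrix:
    """Slide the kernel over the image without padding (the CNN 'convolution')."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(feature_map: Matrix) -> Matrix:
    """Replace every negative value with zero."""
    return [[max(0.0, v) for v in row] for row in feature_map]


def max_pool(feature_map: Matrix, size: int = 2) -> Matrix:
    """Keep the largest value in each non-overlapping size x size block."""
    out_h = len(feature_map) // size
    out_w = len(feature_map[0]) // size
    return [
        [
            max(feature_map[r * size + i][c * size + j]
                for i in range(size) for j in range(size))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


if __name__ == "__main__":
    # Hypothetical image: a bright vertical stripe on a dark background.
    image = [[0.0, 0.0, 1.0, 1.0, 0.0] for _ in range(5)]
    # Hand-picked kernel that responds to dark-to-bright vertical edges.
    kernel = [[-1.0, 1.0], [-1.0, 1.0]]

    filtered = convolve2d(image, kernel)   # 4x4, negative at the bright-to-dark edge
    activated = relu(filtered)             # negatives clipped to zero
    pooled = max_pool(activated)           # 2x2 summary of where the edge was found
    print(pooled)
```

Each stage shrinks the representation (5×5 to 4×4 to 2×2) while keeping the information about where the edge was detected, which is the “progressively filtering and reducing” behavior the text describes.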

Augmented and Virtual Reality in Healthcare

Augmented reality (AR) and virtual reality (VR) technologies have potential applications across the healthcare spectrum, from medical education to patient care. These systems can enhance training for medical students and experienced surgeons alike, while also offering benefits and some challenges for patients.

This section examines the applications of AR and VR in healthcare education, training, and patient experience.

Enhancing Education and Exploration with AR/VR

Humans are inherently visual learners, and play is a fundamental aspect of learning. Interaction with surroundings is crucial for understanding and gaining experience. Traditional medical education can be limiting for interactive disciplines like medicine. Medicine can be viewed as an art form, with clinicians as artists requiring specific skills in an evolving profession. Early in medical school, complex medical concepts are taught without real-world experience. Game-like technologies like VR and AR can enrich the learning experience for future medical and health professionals [29]. Medical students could learn complex surgical procedures or study anatomy through AR without early-stage patient interaction or cadaver dissection. While real patient interaction is essential, VR/AR can initiate training earlier and reduce later-stage training costs.

The same principle applies to specialist training. While human interaction is vital in medicine, it’s not always necessary during training regimens. Physical and digital cues like haptic feedback and photorealistic images/videos can create realistic simulations, fostering learning and reducing training costs (Fig. 2.4).

Figure 2.4.

Virtual reality can help current and future surgeons enhance their surgical abilities prior to an actual operation. (Image obtained from a video still, Osso VR.)

A recent study [30] compared surgical training methods for mastoidectomy. One group (n=18) received standard training, while another trained with a freeware VR simulator (the visible ear simulator – VES). VR-trained trainees showed significant improvement in surgical dissection skills. For precise real-world execution, AR offers advantages in healthcare. Lightweight headsets (e.g., Microsoft HoloLens, Google Glass) projecting relevant images or videos onto the user’s field of view allow focus on the task without visual distraction.

Improving Patient Experience with Immersive Technologies

Humans interact with their environment using audiovisual cues and physical movement. This seemingly ordinary ability is especially valuable to individuals whose debilitating conditions limit movement, or who experience pain and discomfort from chronic illness or treatment side effects. A study of chronic stroke patients showed that immersive VR had a positive effect on the patients’ condition. VR exercises such as virtually catching and throwing a ball [31] can act as a personal rehabilitation physiotherapist, engaging upper limb movement multiple times daily and potentially promoting neuroplasticity and motor function recovery.

For others, immersive technologies can help manage pain and discomfort from cancer or mental health conditions. Studies show that late-stage adult cancer patients can use VR technology with minimal discomfort, benefiting from relaxation, entertainment, and distraction [32]. Immersive virtual worlds offer escapism with artificial characters and environments, allowing interaction and exploration with audiovisual feedback, mimicking daily life activities.

Intelligent Personal Health Records: Empowering Patients

Historically, personal health records have been physician-centric, lacking patient-focused functionalities. To promote self-management and improve patient outcomes, patient-centric personal health records are essential. The goal is to empower patients to manage their health conditions while freeing up clinician time for critical tasks.

Health Monitoring and Wearable Devices: Continuous Data for Personalized Care

For millennia, individuals relied on physicians for information about their bodies. While this remains partially true, wearable health devices (WHDs) are changing this dynamic. WHDs are an emerging technology enabling continuous vital sign measurement across diverse conditions. Their flexibility is key to early adoption: users can track activity during running, meditation, or swimming. The aim is to empower individuals to analyze their own data and manage their health proactively (Fig. 2.5).

Figure 2.5.

Health outcome of a patient depends on a simple yet interconnected set of criteria that are predominantly behavior dependent.

While appearing as simple bands or watches, WHDs bridge biomedical engineering, materials science, electronics, computer programming, and data science [33]. They can be considered ever-present digital health coaches, increasingly recommended for continuous wear to maximize data collection. Garmin wearables exemplify this, focusing on activity tracking across various sports and providing extensive data via the Garmin Connect application for user analysis. Gamification is increasingly integrated into these platforms.

Gamification applies game design elements to non-game contexts, motivating users to achieve goals [34]. On wearable platforms, daily activity data can fuel competition between users. For example, users with similar weekly step averages might be placed on leaderboards, encouraging increased activity to climb rankings and potentially improve health. While gamification in wearables has potential benefits, evidence of efficacy is limited and varies, with some suggesting potential harm.
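The leaderboard idea can be sketched in a few lines. The example below groups users into bands of similar weekly step counts and ranks them within each band. The band width of 10,000 steps, the function name, and the user data are hypothetical; they do not describe how any particular wearable platform builds its rankings.

```python
from typing import Dict, List, Tuple


def build_leaderboards(
    weekly_steps: Dict[str, int], band_width: int = 10_000
) -> Dict[int, List[Tuple[str, int]]]:
    """Group users by step band, then rank each band from most to fewest steps."""
    boards: Dict[int, List[Tuple[str, int]]] = {}
    for user, steps in weekly_steps.items():
        band = steps // band_width          # users in the same band compete together
        boards.setdefault(band, []).append((user, steps))
    for entries in boards.values():
        entries.sort(key=lambda entry: entry[1], reverse=True)
    return boards


if __name__ == "__main__":
    # Made-up weekly step totals.
    steps = {"ana": 52_000, "ben": 58_000, "chi": 31_000, "dev": 35_500}
    for band, ranking in sorted(build_leaderboards(steps).items()):
        print(f"{band * 10_000:>6}+ steps:", ranking)
```

Matching users of similar activity levels keeps the competition within reach for each participant, which is the motivational mechanism the paragraph describes.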

Remote monitoring and early disease detection are highly beneficial for chronic conditions and the elderly. Smart devices or manual data entry over time enable communication with healthcare providers without disrupting daily routines [35]. This exemplifies algorithms collaborating with healthcare professionals to improve patient outcomes.

Natural Language Processing: Bridging the Communication Gap

Natural language processing (NLP) focuses on computer-human interaction using natural language, emphasizing computers’ ability to understand human language. NLP is crucial for big data analysis in healthcare, particularly for EMRs and translating clinician narratives. It’s used for information extraction, unstructured data conversion to structured data, and data/document categorization.

NLP uses classifications to infer meaning from unstructured text, enabling clinicians to use natural language instead of rigid input formats. NLP analyzes EMR data, gathering large-scale information on late-stage complications of medical conditions [26].
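To illustrate what turning unstructured text into structured data looks like in the simplest case, the sketch below pulls blood pressure and heart rate out of a free-text note with regular expressions. This rule-based approach is a deliberately minimal stand-in: production clinical NLP systems typically rely on statistical or neural models and handle far more variation. The patterns, field names, and sample note are assumptions made for this example.

```python
import re
from typing import Dict, Optional

# Assumed phrasings: "BP 142/91", "blood pressure is 120/80", "HR: 78", "heart rate 78".
BP_PATTERN = re.compile(
    r"\b(?:BP|blood pressure)\s*(?:of|is|:)?\s*(\d{2,3})\s*/\s*(\d{2,3})",
    re.IGNORECASE,
)
HR_PATTERN = re.compile(
    r"\b(?:HR|heart rate)\s*(?:of|is|:)?\s*(\d{2,3})",
    re.IGNORECASE,
)


def extract_vitals(note: str) -> Dict[str, Optional[int]]:
    """Return a structured record; fields stay None when nothing is found."""
    record: Dict[str, Optional[int]] = {
        "systolic": None,
        "diastolic": None,
        "heart_rate": None,
    }
    bp = BP_PATTERN.search(note)
    if bp:
        record["systolic"] = int(bp.group(1))
        record["diastolic"] = int(bp.group(2))
    hr = HR_PATTERN.search(note)
    if hr:
        record["heart_rate"] = int(hr.group(1))
    return record


if __name__ == "__main__":
    note = "Patient seen for follow-up. BP 142/91, heart rate 78. Reports mild headache."
    print(extract_vitals(note))
```

The clinician writes in natural language, and the structured fields are filled in afterwards, rather than the clinician being forced into a rigid input form.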

NLP offers substantial benefits in various healthcare areas, including [36]:

- Efficient billing: Extracting information from physician notes to assign medical codes for billing.

- Authorization approval: Using physician notes to prevent delays and administrative errors.

- Clinical decision support: Facilitating decision-making for healthcare teams (e.g., predicting patient prognosis).

- Medical policy assessment: Compiling clinical guidance and formulating care guidelines.

Disease classification using medical notes and ICD (International Statistical Classification of Diseases and Related Health Problems) codes is an NLP application. ICD, managed by the WHO, codes diseases, symptoms, findings, circumstances, and disease causes. For example, NLP algorithms can extract and identify ICD codes from clinical guidelines. Unstructured text is organized into structured data by parsing relevant clauses and classifying ICD-10 codes based on frequency. Algorithm thresholds are adjusted to improve accuracy, and data is aggregated for final output (Fig. 2.6).

Figure 2.6.

Example of ICD-10 mapping from a clinical guidelines’ description [36].
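The clause-parsing, frequency-counting, and thresholding steps described above can be sketched as follows. This is a minimal sketch, not the method of the cited work: the three ICD-10 categories are real, but the keyword lexicon, the `min_count` threshold, and the guideline text are assumptions chosen for illustration.

```python
import re
from collections import Counter

# ICD-10 categories are real; the keyword lists are a hypothetical lexicon.
ICD10_KEYWORDS = {
    "E11": ["type 2 diabetes"],
    "I10": ["hypertension", "high blood pressure"],
    "J32": ["chronic sinusitis"],
}


def split_clauses(text: str) -> list[str]:
    """Split text into lower-cased clauses on sentence and clause punctuation."""
    return [c.strip().lower() for c in re.split(r"[.;,\n]", text) if c.strip()]


def map_icd10(text: str, min_count: int = 2) -> dict[str, int]:
    """Count clauses mentioning each code and keep codes meeting the threshold."""
    counts = Counter()
    for clause in split_clauses(text):
        for code, keywords in ICD10_KEYWORDS.items():
            if any(keyword in clause for keyword in keywords):
                counts[code] += 1
    return {code: n for code, n in counts.items() if n >= min_count}


# Illustrative guideline excerpt written for this example.
guideline = (
    "Adults with type 2 diabetes should be screened annually. "
    "In type 2 diabetes, target HbA1c is individualized; "
    "patients with hypertension need closer follow-up. "
    "Hypertension should be treated promptly. "
    "Chronic sinusitis is managed separately."
)
print(map_icd10(guideline))               # codes mentioned in at least two clauses
print(map_icd10(guideline, min_count=1))  # a lower threshold admits more codes
```

Raising `min_count` trades recall for precision, which is the kind of threshold adjustment the text refers to.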

Integrating Personal Health Records for Holistic Insights

EMRs have created vast patient information databases, enabling the identification of healthcare trends across diseases. EMR databases contain hospital encounters, diagnoses, interventions, lab results, medical images, and clinical narratives. These datasets can be used to build predictive models that aid diagnostics and treatment decisions. As AI matures, it can extract information on disease correlations and links between past and future medical events [37]. What is often missing is data from the periods between interventions and between visits, when patients are well or asymptomatic. Such data could help create end-to-end “health” and “disease” models for studies of long-term effects and disease classification.

While AI applications for EMRs are still limited, the potential for trend detection and health outcome prediction is immense. Current applications include text narrative data extraction, predictive algorithms based on medical test data, and clinical decision support using medical history. AI also has great potential to integrate EMR data with health applications. Current AI healthcare applications are often standalone, used for medical image diagnostics and remote patient monitoring for disease prediction [38]. Integrating these with EMR data could enhance value by adding personal medical history and statistical reference libraries, improving classification and prediction accuracy. EMR providers like Cerner, Epic, and Athena are adding AI functionalities like NLP, simplifying data access and extraction [39]. This could facilitate telehealth and remote monitoring integration with EMR data, enabling bidirectional data transfer, including remote monitoring data in EMR systems.

Numerous EMR providers and systems exist globally, using diverse operating systems and approaches. Interoperability is crucial for maximizing data value. International efforts like Observational Health Data Sciences and Informatics (OHDSI) are gathering EMR data across countries, consolidating 1.26 billion patient records from 17 countries [40]. AI methods, primarily NLP, DL, and neural networks, are used to extract, classify, and correlate EMR data.

DeepCare, an AI platform for end-to-end EMR data processing, uses a deep dynamic memory neural network to store experiences in memory cells. The system’s long short-term memory models illness trajectories and healthcare processes through time-stamped event sequences, capturing long-term dependencies [41]. DeepCare’s framework can model disease progression, recommend interventions, and provide disease prognoses based on EMR databases. Studies on diabetic and mental health patient cohorts demonstrated DeepCare’s ability to predict disease progression, optimal interventions, and readmission likelihood [37].

Robotics and AI-Powered Devices: Transforming Patient Care

Robots are increasingly used in healthcare to replace human workforce, augment human abilities, and assist healthcare professionals. Applications range from surgical robots for laparoscopic procedures and rehabilitation robots to robotic assistants integrated into implants and prosthetics, and robots assisting physicians and staff. Companies are developing robots, particularly for patient interaction and enhancing human-machine connection in care. Most robots under development incorporate AI for improved performance in classification, language recognition, image processing, and more.

Minimally Invasive Surgery: Precision and Reduced Trauma

While surgical outcomes have improved, traditional surgery often remains a relatively low-tech practice using hand tools and instruments for “cutting and sewing.” Conventional surgery relies heavily on surgeons’ tactile senses to differentiate tissues and organs, often requiring open surgery. Surgical technology is undergoing transformation, focusing on minimally invasive procedures that minimize incisions, reduce open surgeries, and utilize flexible tools and cameras [42]. Minimally invasive surgery is seen as the future, but is still early-stage, requiring improvements to enhance patient experience and reduce time and cost. It demands different motor skills due to reduced tactile feedback. Sensors providing finer tactile stimuli are being developed, using tactile data processing and AI, specifically neural networks, to translate sensor input into perceivable stimuli [43]. Artificial tactile sensing offers advantages over physical touch, including larger reference libraries for sensation comparison and standardization across surgeons regarding quantitative features, continuous improvement, and training levels.

Artificial tactile sensing is used in breast cancer screening as a clinical breast exam replacement, complementing imaging techniques like mammography and MRI. The system is built on mechanical tissue measurement reconstruction using pressure sensors. Neural network training adjusts input data weights to achieve desired outputs [44]. The tactile sensory system detects mass calcifications in breast tissue through palpation and comparison with reference data, determining tissue abnormalities. Artificial tactile sensing is also used for assessing liver, brain, and submucosal tumors [45].

Neuroprosthetics: Restoring Function and Enhancing Life

Humanity has long sought immortality and enhancement. Neuroprosthetics, devices augmenting the nervous system for input and output, are moving closer to this aspiration. Augmentation often involves electrical stimulation to address neurological deficits.

Debilitating conditions can impair hearing, vision, cognitive, sensory, or motor skills, leading to comorbidities. Movement disorders like multiple sclerosis and Parkinson’s disease are progressive, causing a gradual decline in these skills while the patient remains conscious. Advances in brain-machine interfaces (BMIs) show promise. A system can learn and store a subject’s intended goal-directed actions, read from EEG signals, while the user “trains” an intelligent controller (the AI). During training, actions that the user deems incorrect are identified as errors. Correct actions are stored, and the error-related brain signals are registered by the AI and used to correct future actions. This “reinforcement learning” allows one or several control “policies” to be stored and personalized to the patient [48]. Neuralink aims to integrate materials science, robotics, electronics, and neuroscience to solve complex health problems [49].
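The error-driven training loop described above can be illustrated with a toy controller. This is a minimal sketch under strong assumptions: the `error_detected` flag stands in for an EEG error-related signal classifier, which is not modeled here, and the action names, learning rate, and exploration rate are hypothetical.

```python
import random


class ErrorDrivenController:
    """Toy controller that learns action values from error feedback."""

    def __init__(self, actions, learning_rate=0.5, seed=0):
        self.values = {action: 0.0 for action in actions}
        self.learning_rate = learning_rate
        self.rng = random.Random(seed)

    def choose(self, explore=0.1):
        """Mostly pick the best-valued action, occasionally try a random one."""
        if self.rng.random() < explore:
            return self.rng.choice(list(self.values))
        return max(self.values, key=self.values.get)

    def update(self, action, error_detected):
        """Treat a detected error signal as reward -1 and its absence as +1."""
        reward = -1.0 if error_detected else 1.0
        self.values[action] += self.learning_rate * (reward - self.values[action])


# Simulated session: the user always intends "reach_left" (hypothetical).
intended = "reach_left"
controller = ErrorDrivenController(["reach_right", "reach_left", "grasp"])
for _ in range(50):
    action = controller.choose()
    # Stand-in for decoding an error-related brain signal from EEG.
    error_detected = action != intended
    controller.update(action, error_detected)

learned_policy = max(controller.values, key=controller.values.get)
print(learned_policy, controller.values)
```

Storing one such value table per task or context is one simple way to picture the multiple personalized “policies” mentioned in the text.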

While still exploratory, neuroprosthetics will be invaluable for patients with neurodegenerative diseases, increasingly reliant on these devices throughout their lives.

Ambient Assisted Living: Promoting Independence and Well-being

With aging populations, more individuals live longer with chronic disorders, often maintaining independent living into old age. Data indicates that half of those over 65 have some disability, totaling over 35 million in the US alone. Most people desire autonomy and control over their lives even in old age [50]. Assistive technologies enhance patient self-reliance, promoting ICT tool use for remote care and information sharing with healthcare professionals. Assistive technology adoption is rapidly growing, especially among those aged 65–74 [51]. Governments, industries, and organizations promote ambient assisted living (AAL), enabling independent living at home. AAL aims to promote healthy lifestyles for at-risk individuals, increase elderly autonomy and mobility, and enhance security, support, and productivity to improve quality of life. AAL applications typically gather data via sensors and cameras, applying AI tools to develop intelligent systems [52]. Smart homes and assistive robots are key AAL implementations.

Smart Homes: Intelligent Living Environments

Smart homes are residential homes augmented with sensors and monitoring tools to facilitate residents’ lives. Popular AAL applications integrated into smart homes or used individually include remote monitoring, reminders, alarms, behavior analysis, and robotic assistance.

Smart homes are beneficial for dementia patients. Low-cost sensors in Internet of Things (IoT) architectures can detect abnormal behavior. Sensors in bedrooms, kitchens, and bathrooms enhance safety. Oven sensors remind patients to switch off cookers. Rain sensors warn when windows are left open during rain. Bath and lamp sensors check that bathroom taps and lights are not left on [53].

Sensors transmit data to local computing devices or cloud platforms for processing via machine learning algorithms, alerting relatives or healthcare professionals when necessary (Fig. 2.7). Daily data collection defines activities of daily living, enabling abnormality detection as deviations from routine. Machine learning algorithms used in smart homes include probabilistic and discriminative methods like Naive Bayes classifiers, Hidden Markov Models, support vector machines, and artificial neural networks [54].

Figure 2.7.

Process diagram of a typical smart home or smart assistant setup.
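Detecting abnormality as a deviation from routine can be sketched with a simple per-activity z-score. This is a statistical baseline rather than any of the classifiers named above; the activity names, the one-week history, and the threshold of three standard deviations are assumptions for illustration.

```python
from statistics import mean, stdev


def find_deviations(history, today, threshold=3.0):
    """Flag activities whose value today is far from the resident's own routine.

    history: activity name -> list of past daily values
    today:   activity name -> today's value
    Returns activity name -> z-score for activities at or beyond the threshold.
    """
    alerts = {}
    for activity, past in history.items():
        if activity not in today or len(past) < 2:
            continue
        sigma = stdev(past)
        if sigma == 0:
            continue  # no variation in the baseline, so a z-score is undefined
        z = (today[activity] - mean(past)) / sigma
        if abs(z) >= threshold:
            alerts[activity] = round(z, 2)
    return alerts


# Hypothetical sensor summaries for one resident over the previous week.
history = {
    "bathroom_visits": [5, 6, 5, 7, 6, 5, 6],
    "kitchen_minutes": [60, 55, 65, 58, 62, 61, 59],
    "sleep_hours": [7.5, 8, 7, 7.5, 8, 7.5, 7],
}
today = {"bathroom_visits": 14, "kitchen_minutes": 57, "sleep_hours": 7.5}

alerts = find_deviations(history, today)
print(alerts)
```

Because the baseline is computed from each resident's own history, the same rule adapts to different daily routines without hand-tuned limits per person.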

Markov Logic Networks have been used for activity recognition, modeling simple and complex activities and generating appropriate alerts for patient abnormalities [55]. Markov Logic Networks handle uncertainty modeling and domain knowledge within a single framework, modeling factors influencing patient abnormality. Uncertainty modeling is crucial for dementia patients whose activities are often incomplete. Domain knowledge of patient lifestyle and medical history enhances activity recognition and decision-making. This machine learning framework detects abnormalities with contextual factors like object, space, time, and duration to support safe action decisions. Different alarm levels are used, from low-level reminders for routine activities to high-level alarms for falls requiring caretaker intervention. Activity monitoring aims to support healthcare practitioners in quantitatively and objectively identifying cognitive function symptoms, diagnosis, and prognosis using smart home systems [56]. Other assistive devices for dementia patients include motion detectors, electronic medication dispensers, and tracking robots.
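The idea of combining contextual factors (object, space, time, duration) into graded alarm levels can be sketched with weighted rules. This is a simplified stand-in and not a Markov Logic Network: an MLN attaches weights to first-order logic formulas and performs probabilistic inference, whereas the sketch below merely sums the weights of satisfied rules. All rules, weights, and cut-offs are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Event:
    obj: str             # object involved, e.g. "oven"
    space: str           # room where the event occurred
    hour: int            # hour of day, 0-23
    duration_min: float  # how long the state has lasted


@dataclass(frozen=True)
class Rule:
    description: str
    weight: float
    applies: Callable[[Event], bool]


# Hypothetical domain knowledge; weights express how worrying each rule is.
RULES = [
    Rule("oven on for more than 90 minutes", 2.0,
         lambda e: e.obj == "oven" and e.duration_min > 90),
    Rule("kitchen activity at night", 1.0,
         lambda e: e.space == "kitchen" and (e.hour >= 23 or e.hour < 5)),
    Rule("on the floor for more than 5 minutes", 5.0,
         lambda e: e.obj == "floor" and e.duration_min > 5),
]


def alarm_level(event, rules=RULES):
    """Sum the weights of satisfied rules and map the score to an alarm level."""
    score = sum(rule.weight for rule in rules if rule.applies(event))
    if score >= 5.0:
        return "high"    # e.g. a suspected fall: notify a caretaker
    if score >= 2.0:
        return "medium"
    if score > 0:
        return "low"     # e.g. a gentle reminder to the resident
    return "none"


print(alarm_level(Event("oven", "kitchen", 14, 30)))
print(alarm_level(Event("oven", "kitchen", 2, 120)))
print(alarm_level(Event("floor", "bathroom", 10, 12)))
```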

Assistive Robots: Physical and Emotional Support

Assistive robots support the physical limitations of the elderly and disabled, assisting in daily activities and acting as extra hands or eyes. They aid mobility, housekeeping, medication management, eating, grooming, bathing, and social communication. RIBA, an assistive robot with human-like arms, helps lift and move patients. It can transfer patients between beds and wheelchairs. Instructions can be given via tactile sensors using tactile guidance teaching methods [57].

The MARIO project (Managing active and healthy Aging with use of caring Service robots) focuses on loneliness, isolation, and dementia in the elderly. The MARIO Kompaï companion robot aims to provide real feelings and emotions to improve acceptance by dementia patients, support dementia assessment tests, and promote user interaction. Developed by Robosoft, the Kompaï robot includes cameras, Kinect motion sensors, and LiDAR for navigation and object identification [58]. It also features speech recognition and other interface technologies, supporting diverse robotic applications on a single platform, similar to smartphone apps. Robotic apps focus on cognitive stimulation, social interaction, and general health assessment. Many apps use AI tools to process robot-collected data for facial recognition, object identification, language processing, and diagnostic support [59].

Cognitive Assistants: Enhancing Mental Function

Many elderly individuals experience cognitive decline, struggling with problem-solving, attention, and memory. Cognitive stimulation is a common rehabilitation approach after brain injuries from stroke, multiple sclerosis, or trauma, and for mild cognitive impairment. Cognitive stimulation can reduce cognitive impairment, and it can be delivered using assistive robots.

Virtrael is a cognitive stimulation platform for assessing, stimulating, and training declining cognitive skills. Virtrael uses visual memory training with three key functionalities: configuration, communication, and games. Configuration mode matches patients with therapists and allows program configuration. Communication tools facilitate patient-therapist and patient-patient communication. Games train cognitive skills like memory, attention, and planning (Fig. 2.8) [60].

Figure 2.8.

Example of games used for training cognitive skills of patients [60].

Social and Emotional Stimulation: Companion Robots

Companion robots for social and emotional stimulation are among the earliest and most studied assistive robot applications. These robots help elderly patients with stress and depression by providing emotional connection, social interaction, and daily task assistance. Ranging from pet-like to peer-like designs, they are interactive and offer psychological and social benefits. PARO, a robotic baby seal, is the most widely used robotic pet, featuring sensors for touch, sound, and vision [61]. The MARIO Kompaï robot assists elderly dementia patients with loneliness and isolation. Buddy, from Blue Frog Robotics, helps with daily activities, medication and appointment reminders, and fall/inactivity detection using motion sensors. Studies on cognitive stimulation suggest a decrease in cognitive decline and dementia progression rates.

AI in Healthcare: A Vision of the Future

AI is increasingly integral to our lives, from smartphones and cars to, crucially, healthcare. This technology will continue to push boundaries and challenge long-held norms, significantly augmenting established healthcare practices.

AI: Near-Term and Long-Term Impact

AI, particularly machine learning, is poised to play a crucial role in future healthcare. It is the driving force behind precision medicine, widely recognized as a critical advancement in care. While early diagnostic and treatment recommendation efforts have been challenging, AI is expected to master these areas. Given rapid progress in AI-driven image analysis, machines will likely examine most radiology and pathology images. Speech and text recognition are already used for patient communication and clinical note capture, with increasing application.

The main challenge for AI in healthcare is not technological capability but clinical practice adoption. Widespread adoption requires regulatory approvals, EHR system integration, standardization, clinician training, payer reimbursement, and ongoing updates. Overcoming these challenges will take longer than technology maturation. Consequently, limited AI use in clinical practice is expected within 5 years, with more extensive use within 10 years.

AI systems are unlikely to replace clinicians but will augment their patient care efforts. Clinicians may shift towards tasks leveraging uniquely human skills like empathy, persuasion, and holistic integration. Healthcare providers who resist working alongside AI may face career risks.

Success Factors for AI in Healthcare

A review by Becker [62] suggests AI in healthcare can aid clinicians, patients, and other healthcare workers in four key ways. These suggestions inspire the following expanded contributions to successful AI implementation in healthcare (Fig. 2.9):

- Disease onset and treatment success assessment.

- Complication management or alleviation.

- Patient-care assistance during treatment or procedures.

- Research for disease discovery and treatment.

Figure 2.9.

The likely success factors depend largely on the satisfaction of the end users and the results that the AI-based systems produce.

Assessing Health Conditions with AI

Individuals are increasingly demanding greater control over condition prediction and assessment, driven by technological advancements and the belief that technology can aid healthy living. While not all answers lie in technology, it is a promising field.

Mood and mental health conditions are critically important. The WHO estimates that one in four people globally experience such conditions, accelerating ill-health and comorbidities. Machine learning algorithms are being developed to detect speech patterns indicating mood disorders. MIT-based research using neural networks detects early depression signs through speech analysis. The “model sees sequences of words/speaking style” to identify patterns indicative of depression [63]. Sequence modeling feeds audio and text from patients with and without depression to the system, accumulating data and pairing text patterns with audio signals. For example, words like “low,” “blue,” and “sad” can be linked to monotone audio. Speech speed and pause length also play a role in depression detection. Fig. 2.10 illustrates how tone and words in a 60-second period can estimate emotion.

Figure 2.10.

Certain mood conditions can be detected early by analyzing the trend, tone of voice, and speaking style of individuals.
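The kinds of signals mentioned above (word choice, speech speed, and pause length) can be turned into numeric features before any model sees them. The sketch below only extracts such features from a time-stamped transcript; it is not the cited neural network and makes no diagnosis. The word list reuses the article's own examples, and the input format and sample utterance are assumptions.

```python
from dataclasses import dataclass

# Example words taken from the text above; a real lexicon would be far larger.
LOW_MOOD_WORDS = {"low", "blue", "sad"}


@dataclass
class SpeechFeatures:
    words_per_minute: float
    mean_pause_sec: float
    low_mood_fraction: float


def extract_features(timed_words):
    """Compute simple speech features.

    timed_words: list of (word, start_sec, end_sec) tuples in spoken order.
    """
    if len(timed_words) < 2:
        raise ValueError("need at least two words")
    total_sec = timed_words[-1][2] - timed_words[0][1]
    if total_sec <= 0:
        raise ValueError("timestamps must span a positive duration")
    # A pause is the silence between the end of one word and the start of the next.
    pauses = [
        max(0.0, nxt[1] - cur[2])
        for cur, nxt in zip(timed_words, timed_words[1:])
    ]
    low_mood = sum(1 for word, _, _ in timed_words if word.lower() in LOW_MOOD_WORDS)
    return SpeechFeatures(
        words_per_minute=60.0 * len(timed_words) / total_sec,
        mean_pause_sec=sum(pauses) / len(pauses),
        low_mood_fraction=low_mood / len(timed_words),
    )


# Hypothetical time-stamped utterance.
utterance = [("I", 0.0, 0.5), ("feel", 1.5, 2.0), ("low", 3.0, 3.5), ("today", 4.5, 5.0)]
features = extract_features(utterance)
print(features)
```

A sequence model of the kind described in the text would consume richer inputs, but hand-built features like these are a common first baseline and are easy to inspect.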

Managing and Alleviating Complications with AI

While mild illness complications are often tolerated, managing symptoms is crucial for certain conditions to prevent severe developments. Infectious diseases exemplify this. Research suggests understanding trauma patient microbiological niches (biomarkers) could predict wound infections, enabling preventative measures [64]. Machine learning can also predict serious complications like neuropathy in type 2 diabetes or early cardiovascular irregularities. Models aiding clinicians in detecting postoperative infections can improve system efficiency [65].

AI for Patient-Care Assistance

Patient-care assistance technologies can improve clinician workflows and enhance patient autonomy and well-being. Treating each patient as an independent system allows for bespoke approaches based on available data, particularly important for the elderly and vulnerable. Virtual health assistants can remind patients to take medications and recommend exercise for optimal outcomes. Affective Computing, enabling machines to process and interpret human emotions, can significantly contribute. Patients can remotely interact with devices, access biometric data, and feel supported by an empathetic system. This can be applied at home and in hospitals to reduce healthcare worker pressure and improve service.

Medical Research Accelerated by AI

AI can accelerate diagnosis and medical research. Biotech, MedTech, and pharmaceutical companies are increasingly partnering to accelerate drug discovery, driven by societal need and expertise scarcity. AI-driven collaboration is key in areas with high research costs and unmet treatment needs. A breakthrough in antibiotic discovery exemplifies this: researchers trained a neural network to “learn” molecule properties and identify those inhibiting E. coli growth [66]. Another example is COVID-19 pandemic research. Predictive Oncology launched an AI platform to accelerate diagnostics and vaccine production using over 12,000 computer simulations per machine, combined with DL to find molecules disrupting viral replication [67], [68].

The Digital Primary Physician: AI-Augmented Care

Imagine walking into a primary care physician’s room. After pleasantries, the doctor inquires about your health. As a patient with multiple conditions – sciatica, snapping hip syndrome, high cholesterol, high blood pressure, and chronic sinusitis – you prioritize chronic sinusitis due to limited appointment time [69]. The doctor asks questions, types notes, examines you briefly, writes a prescription, and schedules a follow-up. Other conditions might require separate appointments, depending on consultation time limits.

This common scenario, while helpful, is not ideal and can leave patients feeling dissatisfied. This frustration puts pressure on healthcare workers and needs to be addressed. Health applications combining AI and remote physicians can answer simple questions, potentially avoiding physical doctor visits.

AI-Powered Prequalification (Triage)

AI bots can pre-qualify symptoms to determine if physician consultation is needed. AI asks patients questions, guiding actions based on responses. Medical professionals rigorously review questions and answers for accuracy. In critical cases, a “See a doctor” response directs patients to primary care physician appointments.
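The question-and-response flow described above can be pictured as a small decision tree. The sketch below is purely illustrative and is not clinical guidance: the questions, branches, and recommendations are hypothetical, and in practice such content would be authored and reviewed by medical professionals.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    text: str
    # Maps an answer ("yes" or "no") to the next Question or to a final
    # recommendation string.
    branches: dict = field(default_factory=dict)


def triage(first_question, answers):
    """Walk the question tree with the given answers until a recommendation."""
    node = first_question
    for answer in answers:
        node = node.branches[answer]
        if isinstance(node, str):
            return node
    raise ValueError("ran out of answers before reaching a recommendation")


# Hypothetical question tree for illustration only.
breathing = Question(
    "Do you have difficulty breathing?",
    {"yes": "See a doctor", "no": "Self-care and re-check in 48 hours"},
)
fever = Question(
    "Has a fever lasted more than 3 days?",
    {"yes": "See a doctor", "no": breathing},
)

print(triage(fever, ["yes"]))
print(triage(fever, ["no", "no"]))
```

Keeping the tree as plain data makes every path easy for reviewers to audit, which matters when a wrong branch could delay care.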

Remote Digital Visits: Expanding Access

Remote video consultations between patients and physicians are a key innovation. Patients book appointments, often same-day, allowing ample time for physicians to review patient information (images, text, video, audio) before consultations. This expands access, particularly for those lacking time or resources for in-person visits, and enables remote physician work.

The Future of Primary Care: Integration and Evolution

While acknowledging AI’s potential benefits, practitioners are skeptical about its future role in primary care, citing lack of empathy and ethical concerns [70]. While valid today, assuming AI will remain stagnant is naive. Humanity seeks efficient, effective solutions that streamline daily life. Combined with smart healthcare material breakthroughs [71] and AI advancements, patients could manage many conditions at home, contacting healthcare workers for specialized needs. Remote interaction technologies are crucial during epidemics, disasters, or when patients are away from home. The SARS-CoV-2 pandemic underscores the need for remote healthcare to save lives and reduce burdens on healthcare workers and patients.

References
